Activation Functions in Artificial Neural Networks

Activation functions are an essential component of artificial neural networks (ANNs): they transform the input signals of a neuron into its output signal.

Furthermore, by introducing non-linearity into the network, activation functions enable ANNs to learn complex patterns and solve a wide range of problems.

This essay explores their fundamentals, their main types, and their importance in machine learning and deep learning.

Role of activation functions in artificial neural networks (ANNs)

In ANNs, neurons receive input signals from other neurons or external data sources, and they produce an output signal based on these inputs.

The activation function determines this output signal: it is applied to the weighted sum of the inputs plus a bias term.

By applying an activation function, we control the output of a neuron: whether it is active and to what extent.
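As a minimal sketch of the computation described above, the following shows a single neuron in plain Python. The function names and the example values are hypothetical, and sigmoid is used only as one possible activation.

```python
import math

def sigmoid(z):
    """Logistic sigmoid, used here only as an example activation."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias, activation=sigmoid):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

# Hypothetical example values, chosen only for illustration.
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.1

# The pre-activation here is about -0.4, so the sigmoid output is below 0.5.
print(neuron_output(inputs, weights, bias))
```

Swapping the `activation` argument for another function changes how the same weighted sum is turned into an output.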

Activation functions also introduce non-linearity into the network, which is essential for ANNs to learn complex, non-linear relationships between input features and output predictions.

Without them, any stack of layers would collapse into a single linear transformation, so ANNs could model only linear relationships, which would significantly reduce their applicability.
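To illustrate why linear layers alone are limiting, here is a small sketch with scalar layers; the same argument holds for weight matrices. Two stacked linear layers give exactly the same outputs as one linear layer with combined parameters, while putting a ReLU between them breaks that equivalence. All names and parameter values are assumptions made for this example.

```python
def linear_layer(x, w, b):
    """A one-dimensional linear layer: w * x + b."""
    return w * x + b

def two_linear_layers(x, w1, b1, w2, b2):
    """Two linear layers applied one after the other, no activation."""
    return linear_layer(linear_layer(x, w1, b1), w2, b2)

def collapsed_layer(x, w1, b1, w2, b2):
    """A single linear layer with weight w2*w1 and bias w2*b1 + b2."""
    return linear_layer(x, w2 * w1, w2 * b1 + b2)

def relu(z):
    return max(0.0, z)

def two_layers_with_relu(x, w1, b1, w2, b2):
    """The same two layers, with a ReLU between them."""
    return linear_layer(relu(linear_layer(x, w1, b1)), w2, b2)

# Hypothetical parameters (w1, b1, w2, b2) and inputs.
params = (2.0, 1.0, -3.0, 0.5)
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]

for x in xs:
    print(x,
          two_linear_layers(x, *params),
          collapsed_layer(x, *params),
          two_layers_with_relu(x, *params))
```

The first two output columns always agree, whereas the third differs wherever the first layer's output is negative.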

Importance of activation functions in machine learning and deep learning

Activation functions play a vital role in both machine learning and deep learning, as they are the primary means of introducing non-linearity into ANNs.

This non-linearity allows ANNs to learn from and model complex relationships, which is a key factor in the success of many modern machine learning applications, such as computer vision, natural language processing, and reinforcement learning.

Furthermore, activation functions influence the convergence of the learning process, affecting the speed at which the ANN learns, as well as its stability.

By selecting the appropriate activation function for a given problem, researchers and practitioners can optimize the performance of their ANNs and improve the effectiveness of their machine learning models.

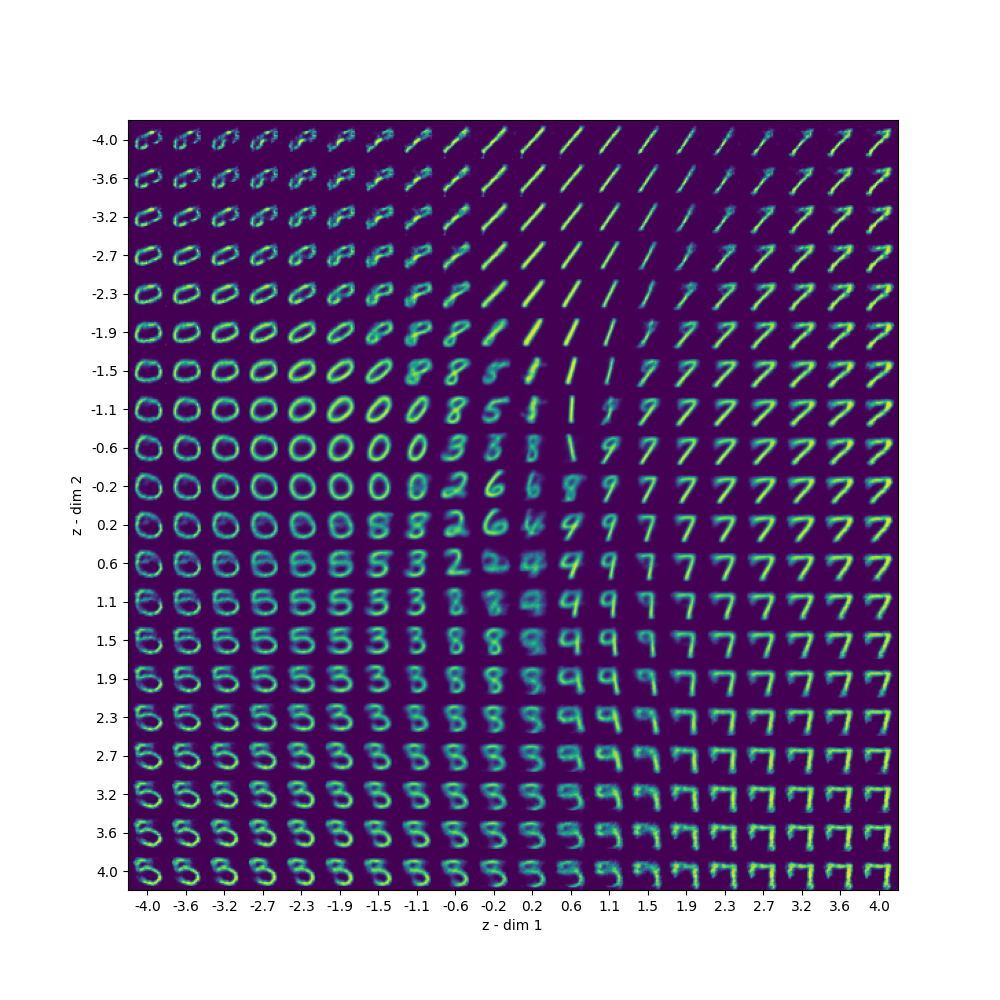

Types of activation functions

Linear activation functions

Linear activation functions are the simplest form of activation functions, in which the output is directly proportional to the input.

They are rarely used in hidden layers because of their limited ability to model complex relationships, but they can be useful in certain situations, such as in the final layer of a regression-based neural network.

Non-linear activation functions

Non-linear activation functions introduce non-linearity into the network, allowing it to learn complex patterns and relationships.

The following are some of the most common ones in use today.

Sigmoid

The sigmoid function is a smooth, S-shaped curve that maps any input value to a range between 0 and 1.

It was popular in early neural networks but has fallen out of favor due to its susceptibility to the vanishing gradient problem.
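The sigmoid and its derivative can be sketched as follows. This is an illustrative implementation, not taken from any particular library; the derivative peaks at 0.25 and shrinks quickly for large positive or negative inputs, which is the root of the vanishing gradient problem mentioned above.

```python
import math

def sigmoid(x):
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def sigmoid_grad(x):
    """Derivative of the sigmoid: s * (1 - s)."""
    s = sigmoid(x)
    return s * (1.0 - s)

# Outputs stay between 0 and 1; gradients are largest at x = 0.
for x in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(x, sigmoid(x), sigmoid_grad(x))
```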

Hyperbolic tangent (tanh)

The hyperbolic tangent (tanh) function is similar to the sigmoid function but maps input values to a range between -1 and 1.

It is also susceptible to the vanishing gradient problem but is generally considered better than sigmoid due to its zero-centered output.
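As a brief sketch of how tanh relates to the sigmoid, the code below uses the identity tanh(x) = 2 * sigmoid(2x) - 1 and the derivative 1 - tanh(x)^2. The helper names are chosen for this example only.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def tanh_via_sigmoid(x):
    """tanh expressed as a rescaled, shifted sigmoid."""
    return 2.0 * sigmoid(2.0 * x) - 1.0

def tanh_grad(x):
    """Derivative of tanh: 1 - tanh(x)^2."""
    t = math.tanh(x)
    return 1.0 - t * t

def identity_error(x):
    """Absolute difference between math.tanh and the sigmoid form."""
    return abs(math.tanh(x) - tanh_via_sigmoid(x))

# The output is zero-centered, and the gradient still shrinks for large |x|.
for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(x, math.tanh(x), tanh_grad(x))
```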

Rectified Linear Unit (ReLU)

ReLU is a popular activation function defined as f(x) = max(0, x), where x is the input.

It’s computationally efficient and helps mitigate the vanishing gradient problem.

However, it can suffer from the “dying ReLU” problem, where some neurons become inactive and never fire.
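A minimal sketch of ReLU and its gradient is shown below, together with a toy illustration of a "dead" neuron. The weight, bias, and inputs are hypothetical values picked so that the pre-activation is negative for every input; the gradient that would update the weight is then zero everywhere.

```python
def relu(x):
    """ReLU: max(0, x)."""
    return max(0.0, x)

def relu_grad(x):
    """Gradient of ReLU; the value at x == 0 is set to 0 by convention."""
    return 1.0 if x > 0 else 0.0

# Hypothetical neuron whose large negative bias keeps it inactive.
inputs = [0.1, 0.5, 0.9]
w, b = 1.0, -5.0

# Gradient of the output with respect to w for each input:
# relu_grad(w * x + b) * x. All entries are zero, so w never changes.
weight_grads = [relu_grad(w * x + b) * x for x in inputs]
print(weight_grads)
```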

Leaky ReLU and other variants

Leaky ReLU is a modification of ReLU that allows for a small, non-zero gradient when the input is negative, addressing the dying ReLU problem.

Other variants, such as Parametric ReLU (PReLU) and Exponential Linear Unit (ELU), also exist with similar goals.
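The variants above can be sketched in a few lines. The default values of `alpha` are common choices but are assumptions here. PReLU has the same form as Leaky ReLU, except that `alpha` is learned during training rather than fixed.

```python
import math

def leaky_relu(x, alpha=0.01):
    """Leaky ReLU: a small slope alpha for negative inputs."""
    return x if x > 0 else alpha * x

def leaky_relu_grad(x, alpha=0.01):
    """Gradient of Leaky ReLU; never exactly zero when alpha > 0."""
    return 1.0 if x > 0 else alpha

def elu(x, alpha=1.0):
    """ELU: smooth exponential curve for negative inputs, bounded below by -alpha."""
    return x if x > 0 else alpha * math.expm1(x)

for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(x, leaky_relu(x), leaky_relu_grad(x), elu(x))
```

Because the gradient for negative inputs is `alpha` instead of 0, a neuron that receives only negative pre-activations can still be updated.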

Softmax

The softmax function is used in the output layer of classification networks, as it converts raw scores into probabilities that sum to one.

It’s particularly useful in multi-class classification problems.
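A small sketch of softmax over a list of raw scores follows. Subtracting the maximum score before exponentiating is a standard trick to avoid overflow and does not change the result; the example scores are made up.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to one."""
    m = max(scores)                       # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores for a three-class problem.
probs = softmax([2.0, 1.0, 0.1])
print(probs, sum(probs))
```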

Swish

Swish is a newer activation function that has shown promising results in various tasks. It is a smooth, non-linear function, commonly written as x · sigmoid(βx): the input is scaled by a sigmoid gate that depends on the input itself.
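Swish is commonly written as x * sigmoid(beta * x). The sketch below assumes that form, with `beta` fixed at 1 by default. Unlike ReLU, the function is smooth and dips slightly below zero for negative inputs before returning towards zero.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def swish(x, beta=1.0):
    """Swish: the input multiplied by a sigmoid gate of itself."""
    return x * sigmoid(beta * x)

# For large positive x the gate is close to 1, so swish(x) is close to x.
# For negative x the output is small and negative.
for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(x, swish(x))
```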

Adaptive activation functions

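These have parameters that are learned during training, so their shape adapts to the data or the network state. For example, Parametric ReLU learns the slope of its negative part. Scaled Exponential Linear Units (SELU), which are used in self-normalizing networks, are sometimes grouped with them, although their constants are fixed rather than learned.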

Characteristics and properties of activation functions

Continuity and differentiability

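Most activation functions are continuous and differentiable almost everywhere, which is essential for gradient-based training with backpropagation. ReLU, for example, is not differentiable at 0, but in practice a fixed value (usually 0) is used for the derivative at that point.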

Monotonicity

Monotonic functions preserve the order of input values in the output, which can be beneficial in certain situations. Sigmoid, tanh, and ReLU are monotonic, whereas Swish is not.

Boundedness

Bounded functions have an upper and lower limit on their output values, which can help control the output range and improve network stability.

Non-linearity

Non-linearity is the key property that enables neural networks to learn complex relationships and model intricate patterns in data.

Derivative properties

The derivatives of activation functions play a crucial role in backpropagation and optimization. Some activation functions, like ReLU, are piecewise linear, so their derivatives are piecewise constant (0 or 1 for ReLU), making them cheap to compute.
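One way to sanity-check the derivative of an activation function is to compare it with a finite-difference estimate. The sketch below does this for sigmoid, tanh, and ReLU; the helper names and test points are assumptions made for the example, and the points avoid x = 0, where ReLU has a kink.

```python
import math

def numerical_grad(f, x, h=1e-5):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def relu(x):
    return max(0.0, x)

# Each entry pairs a function with its analytic derivative.
analytic = {
    "sigmoid": (sigmoid, lambda x: sigmoid(x) * (1.0 - sigmoid(x))),
    "tanh": (math.tanh, lambda x: 1.0 - math.tanh(x) ** 2),
    "relu": (relu, lambda x: 1.0 if x > 0 else 0.0),
}

def max_gradient_error(points):
    """Largest gap between the analytic and numerical derivatives."""
    worst = 0.0
    for f, df in analytic.values():
        for x in points:
            worst = max(worst, abs(numerical_grad(f, x) - df(x)))
    return worst

print(max_gradient_error([-2.0, -0.5, 0.5, 2.0]))
```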

Advantages and limitations of different activation functions

Computational complexity

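Some activation functions are more computationally intensive than others, which can impact training time and efficiency. For example, sigmoid and tanh require evaluating exponentials, while ReLU needs only a comparison.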

Gradient vanishing and exploding problems

Functions like sigmoid and tanh can suffer from vanishing gradients, making it challenging to train deep networks.

ReLU and its variants have therefore gained popularity, because they help mitigate this issue.
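A rough way to see the effect of depth is to multiply the activation derivatives along a chain of layers, as backpropagation does. The sketch below is deliberately simplified: it assumes every weight is 1 and looks only at the activation derivatives, whereas real backpropagation also multiplies by the weights.

```python
import math

def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    return 1.0 if z > 0 else 0.0

def chain_gradient(grad_fn, pre_activations):
    """Product of activation derivatives along a chain of layers."""
    g = 1.0
    for z in pre_activations:
        g *= grad_fn(z)
    return g

depth = 10  # hypothetical number of layers

# Best case for sigmoid: every pre-activation is 0, where the derivative is 0.25.
sigmoid_chain = chain_gradient(sigmoid_grad, [0.0] * depth)

# ReLU with positive pre-activations passes the gradient through unchanged.
relu_chain = chain_gradient(relu_grad, [1.0] * depth)

print(sigmoid_chain, relu_chain)
```

Even in the best case for sigmoid, the factor shrinks as 0.25 to the power of the depth, while active ReLU units leave it at 1.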

Convergence speed and stability

Different functions can influence the speed of convergence and the stability of the learning process.

Initialization and optimization techniques

The choice of activation function can affect the initialization and optimization techniques we use during training.

For instance, weight initialization methods are tailored to specific activation functions to improve training efficiency and convergence: Glorot (Xavier) initialization is usually paired with sigmoid or tanh, and He initialization with ReLU and its variants.
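The two initialization schemes can be sketched with the standard library alone. This example assumes the common uniform form of Glorot initialization, with limit sqrt(6 / (fan_in + fan_out)), and the normal form of He initialization, with standard deviation sqrt(2 / fan_in). Function names and layer sizes are hypothetical.

```python
import math
import random

def glorot_uniform(fan_in, fan_out, rng):
    """Glorot (Xavier) uniform initialization, often paired with tanh or sigmoid."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)]
            for _ in range(fan_in)]

def he_normal(fan_in, fan_out, rng):
    """He normal initialization, often paired with ReLU and its variants."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_out)]
            for _ in range(fan_in)]

rng = random.Random(0)  # fixed seed so the example is repeatable

W_tanh = glorot_uniform(4, 3, rng)      # weights for a small tanh layer
W_relu = he_normal(200, 200, rng)       # weights for a larger ReLU layer

print(len(W_tanh), len(W_tanh[0]), len(W_relu), len(W_relu[0]))
```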

Practical considerations and applications of activation functions

Selecting the right activation function for a specific problem

The choice of activation function depends on the specific problem and the architecture of the neural network. Experimentation and cross-validation are common approaches to find the most suitable activation function for a given task.

Combining activation functions in a single network

In some cases, it may be beneficial to combine different functions within a single network, either in different layers or for different groups of neurons.

This can help the network learn a diverse set of features and representations.

Activation functions in recurrent neural networks (RNNs) and convolutional neural networks (CNNs)

RNNs and CNNs, which are specialized neural network architectures for sequential data and image data, respectively, often use specific activation functions tailored to their unique requirements.

For example, Long Short-Term Memory (LSTM) cells in RNNs use a combination of sigmoid and tanh functions, while ReLU is a popular choice for CNNs.
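To show where the two functions appear in an LSTM, here is a sketch of one step of a single-unit cell with scalar input and state, following the standard LSTM equations. The parameter layout (a dictionary of `(w_x, w_h, b)` tuples) and all values are assumptions for illustration. Sigmoid produces the gates, which lie between 0 and 1, and tanh produces the candidate value and the squashed cell output.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One step of a single-unit LSTM cell with scalar input and state."""
    def gate(name, activation):
        w_x, w_h, b = params[name]
        return activation(w_x * x + w_h * h_prev + b)

    f = gate("forget", sigmoid)        # how much of the old cell state to keep
    i = gate("input", sigmoid)         # how much of the candidate to write
    o = gate("output", sigmoid)        # how much of the cell state to expose
    g = gate("candidate", math.tanh)   # candidate value in (-1, 1)

    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c

# Hypothetical parameters: the same (w_x, w_h, b) for every gate.
params = {name: (0.5, 0.5, 0.0)
          for name in ("forget", "input", "output", "candidate")}

h, c = 0.0, 0.0
for x in (1.0, -1.0, 0.5):
    h, c = lstm_step(x, h, c, params)
    print(x, h, c)
```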

Custom activation functions

In some cases, it may be necessary to design custom functions to suit the specific requirements of a given problem. This can involve modifying existing functions or developing entirely new ones.

Conclusion

Recap of the significance of activation functions in neural networks

Activation functions play a crucial role in the performance and behavior of artificial neural networks.

Furthermore, they introduce non-linearity, allowing networks to learn complex, non-linear relationships between input and output.

Throughout this essay, we have explored various types, their characteristics, and their advantages and limitations.

Future research and advancements in activation function design

As the field of deep learning continues to evolve, new activation functions are likely to be discovered and developed.

Researchers are constantly exploring novel activation functions that can address existing limitations, such as vanishing and exploding gradients, and improve the efficiency and stability of neural network training.

Additionally, adaptive activation functions that adjust their behavior based on the input data or during the training process may gain more prominence in the future.

Final thoughts on the role of activation functions in modern deep learning applications

Activation functions are a fundamental component of neural networks and have a significant impact on their performance.

Understanding their properties and selecting the appropriate activation function for a specific problem is essential for achieving optimal results.

As deep learning continues to advance and find new applications across various domains, the importance of activation functions in designing robust and efficient neural networks will remain paramount.