The Perceptron: A Building Block of Neural Networks

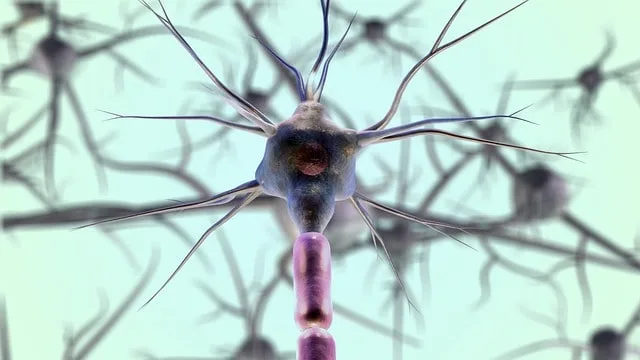

The perceptron is a type of linear classifier and a fundamental building block of artificial neural networks.

More specifically, it is an algorithm for binary classification: it takes multiple input features, computes their weighted sum, and produces a binary output.

It is the simplest form of a neural network, consisting of a single neuron, or node.

Historical context and development of the perceptron

The perceptron was first introduced by Frank Rosenblatt in 1957, inspired by earlier work on artificial neurons by Warren McCulloch and Walter Pitts.

Rosenblatt designed the perceptron as an electronic device, with the goal of mimicking the basic functioning of a biological neuron.

Its development marked a significant milestone in the history of artificial intelligence, as it demonstrated the potential for machines to learn and adapt.

Importance of the perceptron in artificial neural networks and machine learning

The perceptron laid the foundation for the development of more complex neural network architectures, such as multi-layer perceptrons, convolutional neural networks, and recurrent neural networks.

Its simplicity and ability to learn from data make it an essential component of many machine learning algorithms.

Despite its limitations, it has played a crucial role in advancing the field of artificial intelligence and deep learning.

Mathematical foundation of the perceptron

The perceptron model and its components

The perceptron model consists of input features, weights, and an activation function.

The algorithm multiplies each input feature by its corresponding weight and sums the results.

Next, it passes this sum through an activation function, which determines the binary output.
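
To make the weighted sum and the thresholded output concrete, here is a minimal sketch in plain Python. The function names, the bias term, and the AND-gate weights are illustrative assumptions rather than anything prescribed by the perceptron itself; the bias plays the same role as a threshold on the sum.

```python
def weighted_sum(features, weights, bias=0.0):
    """Return the dot product of features and weights, plus an optional bias."""
    return sum(x * w for x, w in zip(features, weights)) + bias


def predict(features, weights, bias=0.0):
    """Return 1 if the weighted sum is non-negative, otherwise 0."""
    return 1 if weighted_sum(features, weights, bias) >= 0 else 0


# Hypothetical hand-picked parameters that make the unit behave like an AND gate.
and_weights = [1.0, 1.0]
and_bias = -1.5

outputs = [predict([a, b], and_weights, and_bias) for a in (0, 1) for b in (0, 1)]
print(outputs)  # [0, 0, 0, 1]
```

Only the input (1, 1) pushes the weighted sum above zero, so it is the only one classified as 1.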

The activation function

The activation function for the perceptron is typically a step function, such as the Heaviside step function or a sign function.

This function returns one of two values depending on whether the weighted sum of the input features is above or below a threshold.
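
The two step functions mentioned above can be sketched as follows. The names are hypothetical, and treating a sum exactly equal to the threshold as the positive class is an assumed convention; texts differ on that edge case.

```python
def heaviside_step(z, threshold=0.0):
    """Return 1 when z reaches the threshold, otherwise 0."""
    return 1 if z >= threshold else 0


def sign_step(z, threshold=0.0):
    """Return +1 when z reaches the threshold, otherwise -1."""
    return 1 if z >= threshold else -1


# Comparing z against a threshold t gives the same result as
# comparing (z - t) against zero, so a threshold can be folded into a bias term.
for z in (-1.0, 0.5, 2.0):
    assert heaviside_step(z, threshold=0.5) == heaviside_step(z - 0.5)
```

The two functions differ only in how they label the negative class (0 versus -1), which affects how the weight update is written but not the decision boundary.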

The learning algorithm and weight updates

The perceptron learning algorithm updates the weights iteratively based on the training examples.

For each misclassified example, it adds a fraction of the input feature values to the weights or subtracts it from them, depending on the direction of the error.

The learning rate parameter controls the magnitude of these updates.
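
A single update step might look like the sketch below. It assumes 0/1 labels, a bias term that is updated alongside the weights, and a hypothetical function name; a correctly classified example produces an error of zero and leaves the parameters unchanged.

```python
def perceptron_update(weights, bias, features, target, learning_rate=0.1):
    """Apply one perceptron update and return the new (weights, bias)."""
    activation = sum(x * w for x, w in zip(features, weights)) + bias
    prediction = 1 if activation >= 0 else 0
    error = target - prediction  # +1, 0, or -1 for 0/1 labels
    new_weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
    new_bias = bias + learning_rate * error
    return new_weights, new_bias


# A positive example that is currently misclassified pulls the weights toward its features.
weights, bias = perceptron_update([0.0, 0.0], -0.5, [1.0, 2.0], target=1, learning_rate=0.5)
print(weights, bias)  # [0.5, 1.0] 0.0
```

Here the error is +1, so half of each feature value (the learning rate of 0.5) is added to the corresponding weight.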

Convergence and limitations

The perceptron learning algorithm is guaranteed to converge to a solution in a finite number of updates if the data is linearly separable.

However, if the data is not linearly separable, no set of weights classifies every example correctly, so the algorithm never converges and keeps updating the weights indefinitely. This is one of its main limitations.
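
The difference between the two cases can be observed with a small training loop that stops after the first epoch with no mistakes. This is a sketch under assumptions: the function name, the zero initialization, and the `max_epochs` cap are illustrative choices, and the cap exists only so that the loop terminates on data that is not separable.

```python
def train_until_converged(samples, labels, learning_rate=0.1, max_epochs=100):
    """Return (weights, bias, epochs_used); epochs_used is None if the cap is hit."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for features, target in zip(samples, labels):
            activation = sum(x * w for x, w in zip(features, weights)) + bias
            prediction = 1 if activation >= 0 else 0
            error = target - prediction
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
                bias += learning_rate * error
        if mistakes == 0:  # a full pass without errors means the weights separate the data
            return weights, bias, epoch
    return weights, bias, None


SAMPLES = [[0, 0], [0, 1], [1, 0], [1, 1]]
AND_LABELS = [0, 0, 0, 1]  # linearly separable
XOR_LABELS = [0, 1, 1, 0]  # not linearly separable

_, _, and_epochs = train_until_converged(SAMPLES, AND_LABELS)
_, _, xor_epochs = train_until_converged(SAMPLES, XOR_LABELS)
print(and_epochs is not None, xor_epochs is None)  # True True
```

Raising `max_epochs` does not help in the second case: every pass over the data contains at least one mistake, so the weights keep changing.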

Advantages and limitations of the perceptron

Simplicity and ease of implementation

One of the perceptron’s main advantages is its simplicity, which makes it easy to implement and understand.

The learning algorithm is also straightforward, and the model trains relatively quickly.

Binary classification capabilities

The perceptron is effective at solving binary classification problems, especially when the data is linearly separable.

Limitations in modeling complex relationships

However, the perceptron is limited in its ability to model complex, non-linear relationships between the input features and the output.

Because its decision boundary is linear, it cannot learn higher-order interactions between features.

The XOR problem and the perceptron’s inability to solve it

The XOR problem is a classic example of a problem that the perceptron cannot solve due to its linear nature.

The XOR function is not linearly separable, so the perceptron cannot find a linear decision boundary that classifies all four input combinations correctly.
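
One way to see this without any training is to try many candidate lines directly. The sketch below searches a coarse grid of weights and biases (the grid range and step are arbitrary assumptions) and records the best number of XOR points any of them classifies correctly.

```python
from itertools import product

XOR_SAMPLES = [(0, 0), (0, 1), (1, 0), (1, 1)]
XOR_LABELS = [0, 1, 1, 0]


def correct_count(w1, w2, bias):
    """Count the XOR points that the rule w1*x1 + w2*x2 + bias >= 0 gets right."""
    correct = 0
    for (x1, x2), target in zip(XOR_SAMPLES, XOR_LABELS):
        prediction = 1 if w1 * x1 + w2 * x2 + bias >= 0 else 0
        correct += prediction == target
    return correct


# Candidate parameter values from -2.0 to 2.0 in steps of 0.25.
grid = [i / 4 for i in range(-8, 9)]
best = max(correct_count(w1, w2, b) for w1, w2, b in product(grid, repeat=3))
print(best)  # 3
```

The grid is only an illustration, but the result holds for all real-valued parameters: classifying (0, 1) and (1, 0) as 1 requires w1 + w2 + 2·bias ≥ 0, while classifying (0, 0) as 0 requires bias < 0, and together these force w1 + w2 + bias > 0, so (1, 1) is always misclassified.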

Extensions and variations of the perceptron

Multi-layer perceptron (MLP)

The multi-layer perceptron (MLP) is an extension of the perceptron that includes multiple layers of neurons, allowing it to model more complex relationships.

With non-linear activation functions, an MLP can learn non-linear decision boundaries, making it more powerful and versatile than a single perceptron.
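
As a small illustration of what an extra layer buys, the sketch below wires three step-function units into a two-layer network that computes XOR. The weights are hand-picked assumptions, not learned values; in practice MLPs are usually trained with differentiable activations rather than set by hand.

```python
def step(z):
    """Heaviside step: 1 for non-negative input, otherwise 0."""
    return 1 if z >= 0 else 0


def xor_mlp(x1, x2):
    """Two-layer network with hand-set weights that computes XOR."""
    h_or = step(x1 + x2 - 0.5)      # hidden unit 1 fires if at least one input is 1 (OR)
    h_nand = step(-x1 - x2 + 1.5)   # hidden unit 2 fires unless both inputs are 1 (NAND)
    return step(h_or + h_nand - 1.5)  # output fires only if both hidden units fire (AND)


print([xor_mlp(a, b) for a in (0, 1) for b in (0, 1)])  # [0, 1, 1, 0]
```

Each unit on its own is still a linear classifier; the non-linear boundary comes from combining the two hidden units in the output layer.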

Radial basis function (RBF) networks

Radial basis function networks are another extension that uses radial basis functions as activation functions instead of step functions.

Given enough hidden units, this change allows RBF networks to approximate continuous functions to arbitrary accuracy, increasing their ability to model complex relationships.
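
A minimal sketch of the idea, assuming Gaussian basis functions: each hidden unit responds to how close the input is to a centre, and the output is a weighted sum of those responses. The centres, output weights, bias, and `gamma` value below are hand-picked for illustration, whereas a real RBF network would fit them to data.

```python
import math


def gaussian_rbf(point, center, gamma=1.0):
    """Gaussian radial basis function: exp(-gamma * squared distance to the centre)."""
    squared_distance = sum((p - c) ** 2 for p, c in zip(point, center))
    return math.exp(-gamma * squared_distance)


def rbf_network(point, centers, output_weights, bias, gamma=1.0):
    """Weighted sum of the hidden-unit responses, plus a bias."""
    hidden = [gaussian_rbf(point, c, gamma) for c in centers]
    return sum(w * h for w, h in zip(output_weights, hidden)) + bias


# Hypothetical parameters: one centre on each XOR-positive point.
centers = [(0, 1), (1, 0)]
points = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [1 if rbf_network(p, centers, [1.0, 1.0], bias=-0.9) >= 0 else 0 for p in points]
print(labels)  # [0, 1, 1, 0]
```

Because the hidden responses depend on distance rather than on a single linear projection, the thresholded output separates XOR, which a single step-function unit cannot do.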

Support vector machines (SVM)

Support vector machines (SVMs) are a class of classifiers that can be viewed as yet another extension of the perceptron’s linear decision boundary.

SVMs maximize the margin between classes, which tends to make them more robust to noise and overfitting.

They can also handle data that is not linearly separable through the use of kernel functions.
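
A kernel function computes a dot product in a higher-dimensional feature space without constructing that space explicitly. The sketch below checks this for a degree-2 polynomial kernel on 2-D inputs; it illustrates the kernel idea only and is not an SVM implementation, and the function names are hypothetical.

```python
import math


def polynomial_kernel(x, y):
    """Homogeneous degree-2 polynomial kernel: (x . y) ** 2."""
    return sum(a * b for a, b in zip(x, y)) ** 2


def explicit_feature_map(x):
    """Feature map whose dot product equals the kernel above for 2-D inputs."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


x, y = (1.0, 2.0), (3.0, 4.0)
via_kernel = polynomial_kernel(x, y)
via_features = sum(a * b for a, b in zip(explicit_feature_map(x), explicit_feature_map(y)))
print(math.isclose(via_kernel, via_features))  # True
```

The mapped features include the product of the two inputs, which is the kind of interaction term a linear boundary in the original space cannot express.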

Convolutional neural networks (CNN) and recurrent neural networks (RNN)

Both convolutional neural networks (CNNs) and recurrent neural networks (RNNs) build on the same basic unit as the perceptron.

Each is suited to a specific type of data: images for CNNs and sequences for RNNs.

These specialized architectures have significantly advanced the field of deep learning.

Practical applications of the perceptron

Binary classification tasks

The perceptron is well suited to binary classification tasks such as spam detection, medical diagnosis, and sentiment analysis.

Image recognition and computer vision

Although more advanced architectures like CNNs are now more common, perceptrons were useful in image recognition and computer vision tasks, especially in the early days of neural network research.

Natural language processing

Perceptrons were also useful in natural language processing tasks, such as text classification and sentiment analysis.

However, more advanced models such as RNNs, LSTMs, and transformers have since become the state of the art for these tasks.

Robotics and control systems

Perceptrons have been used in robotics and control systems for tasks such as navigation, obstacle avoidance, and motor control.

The simplicity and ease of implementation of perceptrons make them suitable for applications where computational resources are limited.

Conclusion

Recap of the significance of the perceptron in machine learning

The perceptron is an essential building block in the development of artificial neural networks and machine learning.

It is the foundation of more advanced models, such as MLPs, RNNs, and CNNs, which have driven significant advancements in the field.

Future research and advancements in perceptron-based models

Despite its limitations, it remains a valuable tool in the machine learning toolbox, and ongoing research continues to explore new variations, extensions, and applications of perceptron-based models.

Final thoughts on the role of the perceptron in modern machine learning applications

While more advanced neural network architectures have taken center stage in recent years, the perceptron remains an important and influential model in the history of machine learning.

Understanding the perceptron and its limitations provides valuable insight into the development and functioning of more complex models, as well as a greater appreciation for the ongoing evolution of the field.